Navigating the Challenges of Predicting EV Fast Charging Patterns

The complexity of electric vehicle (EV) charging, particularly with direct current fast chargers (DCFC), presents a significant challenge for predicting charging profiles. These profiles, which show the charging power based on the battery’s state-of-charge (SoC), vary widely due to numerous factors. An EV might charge differently depending on the initial SoC or the power rating of the connector used. Generally, batteries charge faster at lower SoCs and slow as they near full capacity. Temperature also affects charging speed, with lower temperatures typically reducing charging rates. Additionally, different EV models, each with unique hardware and battery management systems, can exhibit distinct charging behaviors. Other influential factors include the battery state-of-health (SoH), connector types, and grid conditions.

To gain meaningful insights into these charging behaviors, a comprehensive dataset is essential. This study utilized a dataset of 909,135 high-quality DCFC sessions collected from 612 chargers across northwestern Europe between November 2021 and July 2024. This dataset includes time series data on SoC and charging power, alongside information such as connector power rating, session duration, total energy delivered, and charging station location.

The dataset, depicted in Fig. 1, covers a wide range of EV models and charging conditions, with connectors rated from 50 kW to 360 kW. The data reveal substantial variability in charging sessions concerning SoC changes and session durations, complicating direct comparisons with lab-based reference data. Most sessions start with SoCs between 10% and 60% and end near full capacity, though many shorter top-up events are also present. Drivers’ varying charging habits reflect their diverse preferences and needs, with some charging close to full capacity due to range anxiety and others stopping earlier due to time constraints or battery health concerns. Charging duration varies widely, from brief top-ups of just over 10 minutes to over an hour for near-full charges. A marked slowdown in charging is observed as batteries approach full capacity, with sessions targeting over 90% SoC taking significantly longer than those ending between 80% and 89% SoC. Sessions with less than a 10% change in SoC were excluded from the analysis.

a The geographic distribution of the charging stations from which the charge curve dataset was collected. Stations located in the Netherlands did not report cumulative energy delivered and are therefore excluded from the quantitative analyses in panels (b–e). b A diverse array of charging stations with rated connector power ranging from 50 kW to 360 kW is included in the dataset, with the primary connector types being Type 2 Combined Charging System (CCS) and CHAdeMO. c Most charging sessions analysed in this study originated from Great Britain and Germany, with fewer than 1% of sessions coming from the Netherlands. The dataset was collected from 2021 to 2024, and covers a large range of sessions with various levels of delivered energies and connector power ratings. It should be noted that these describe only the subset of data examined here and should not be taken as representative of Shell’s overall charging operations. d The distribution of estimated battery capacity across different connector power ratings. Violin plots show the data distribution (kernel density), with embedded box plots indicating the median (centre line), interquartile range (box limits), and whiskers (1.5 × interquartile range). e The percentage of sessions as a function of starting and stopping state-of-charge (SoC), as well as the average session duration of these sessions. Note that for plotting purposes, these are computed from a fraction of the dataset due to minor inconsistencies in recorded starting or stopping SoC values in some sessions.

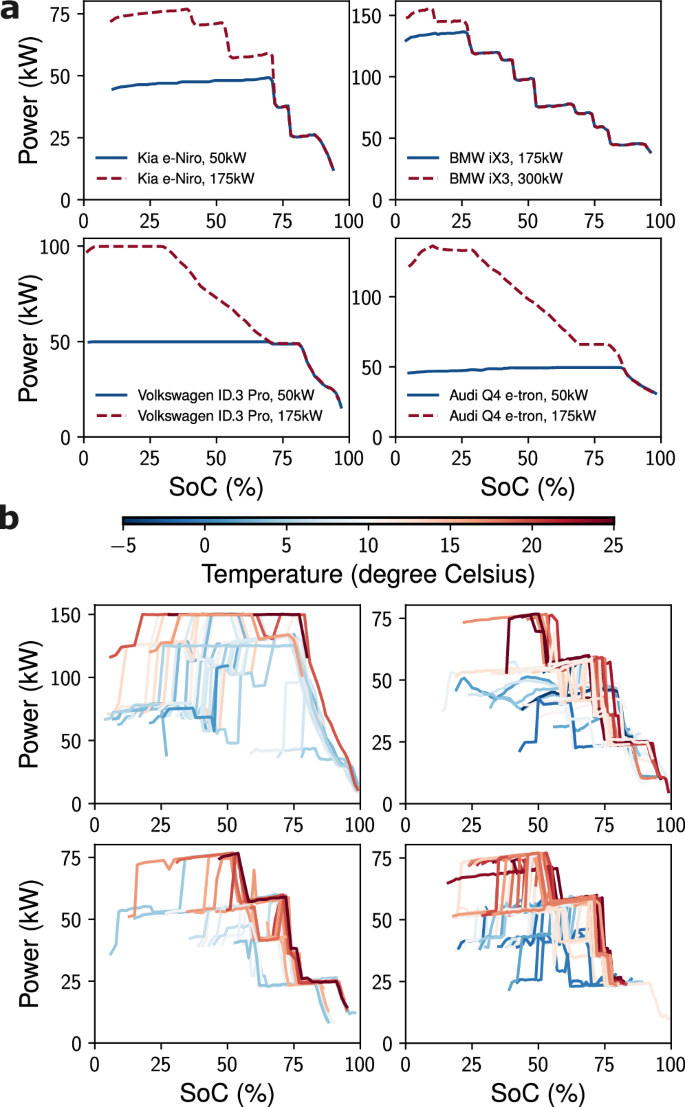

Further insights are provided by Fig. 2, which compares controlled laboratory charging profiles with real-world data. Laboratory measurements at Shell Technology Center show that power typically remains constant or rises until a certain SoC threshold, after which it declines. In contrast, real-world profiles vary greatly due to factors like starting SoC and ambient temperature. While stations don’t record battery temperature, ambient temperatures were inferred from ERA5 reanalysis data [39], showing that colder conditions slow charging. The dataset spans a broad range of ambient temperatures, from −14 °C to 35 °C.

a Reference charging profiles of Kia e-Niro, BMW iX3, Volkswagen ID.3 Pro, and Audi Q4 e-tron measured in controlled laboratory sessions under ideal charging conditions of ambient temperature of 30 °C with Combined Charging System (CCS) chargers of different rated connector powers. b Each subplot represents the charging profiles of a single anonymous user (and likely the same EV) using the same connector type and power rating under different environmental ambient temperatures. The sampled charging profiles demonstrate that real-world charging patterns are highly diverse and irregular, and dependent on many factors including starting SoC and ambient temperature.

For a predictive model to be effective, it must generalize across various EV models and support different connector types and power ratings. It should capture the impact of starting SoC, battery capacity, and environmental conditions on charging behavior while using data readily available at charging stations. The model also needs to handle large, noisy datasets and adapt to real-time data, making predictions even with minimal input. This research introduces a deep learning framework designed to meet these needs, providing a practical solution for predicting charging profiles while managing the inherent uncertainties in EV charging scenarios.

Advanced Anomaly Detection and Predictive Modeling for EV Charging

The deep learning framework is developed for real-world application, with input data derived from anonymized charging station information. It comprises two main components: a β-variational autoencoder (β-VAE) for anomaly detection and a predictive model for charging profiles. Charging data is structured as multivariate time series of charging power and SoC, supplemented with static covariates such as starting SoC, connector power and type, estimated EV battery capacity, and ambient temperature. While the anomaly detection model requires complete profiles to establish normal behavior, the prediction model can operate with partial profiles in real-time deployment, allowing for adaptive forecasting. The selected static covariates are easily accessible, with starting SoC, connector type, and power rating provided by the charger. EV battery capacities can be estimated dynamically or obtained through image recognition, and ambient temperatures can be measured directly. In this study, battery capacities were estimated using the total energy delivered and SoC changes, while ambient temperatures were sourced from ERA5 reanalysis data. Notably, the framework is optimized to include estimated EV battery capacity and ambient temperature, but it remains effective without these features, with only slight reductions in accuracy. Further details are in Supplementary Fig. 6. An illustration of the proposed workflow, including the anomaly detection and charging profile prediction models, is shown in Fig. 3.
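The capacity estimate described above (total energy delivered divided by the SoC change) can be sketched in a few lines. This is a minimal illustration, not the study's implementation: the function name, the handling of short sessions, and the decision to ignore charging losses are all assumptions made here.

```python
def estimate_battery_capacity_kwh(energy_delivered_kwh, start_soc_pct, end_soc_pct,
                                  min_delta_soc_pct=10.0):
    """Estimate battery capacity (kWh) from a single charging session.

    capacity ~= energy delivered / fraction of the battery that was filled.
    Charging losses are ignored (an assumption of this sketch).
    """
    delta_soc_pct = end_soc_pct - start_soc_pct
    # Small SoC changes make the ratio unstable; the 10% default is a
    # hypothetical choice borrowed from the dataset's exclusion rule.
    if delta_soc_pct < min_delta_soc_pct:
        raise ValueError("SoC change too small for a stable estimate")
    return energy_delivered_kwh / (delta_soc_pct / 100.0)


# 30 kWh delivered while charging from 20% to 70% implies a 60 kWh pack.
capacity = estimate_battery_capacity_kwh(30.0, 20.0, 70.0)
```

Because the charger meters energy on its own side, an estimate of this kind would likely include conversion losses, which is one reason to treat it as an approximate covariate rather than a nameplate value.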

a A high-level illustration of the AI-based system for direct current fast charging (DCFC). Charging session data are continuously collected from charging stations, aggregated on cloud servers, and delivered to high-performance computing (HPC) platforms for large-scale model training. The resulting trained models are deployed via cloud servers to provide real-time charging profile and time predictions, together with anomaly detection. b A schematic of the beta-variational autoencoder (β-VAE)-based anomaly detection model, which identifies irregularities in charging sessions by reconstructing the original power/state-of-charge (SoC) charging profile and evaluating the associated reconstruction error. RevIN denotes reversible instance normalisation, and long short-term memory (LSTM) is used for sequence modelling. c A schematic of the probabilistic charging profile prediction model, which predicts the quantiles of a full charging profile from partial inputs. Gated linear unit (GLU), gated residual network (GRN) and variable selection network (VSN), are key architectural components of the anomaly detection and charging profile prediction models, with additional details provided in Supplementary Fig. 1.

Real-world EV charging data is often noisy, with potential errors from various sources like connector issues, firmware interruptions, or data recording errors. While predictive models must handle typical noise, abnormal sessions can skew results if not properly managed. Since faults aren’t explicitly labeled, a β-VAE-based anomaly detection model is trained on complete profiles to filter out anomalies. The model, detailed in Fig. 3b, learns a latent representation of normal profiles by minimizing reconstruction and Kullback-Leibler (KL) divergence losses. Each session is scored for reconstruction error, with abnormal profiles flagged when errors exceed a statistical threshold, indicating deviations from normal charging behavior. Examples of detected anomalies are shown in Fig. 4a.
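The β-VAE objective mentioned above combines a reconstruction term with a KL divergence term weighted by β. The sketch below shows the standard form for a diagonal Gaussian posterior and a standard normal prior. The use of mean squared error for reconstruction and the default β value are assumptions of this illustration; the text does not state which choices the study made.

```python
import math


def beta_vae_loss(x, x_hat, mu, log_var, beta=1.0):
    """Return (total, reconstruction, kl) for one sample.

    x, x_hat : original and reconstructed profile values
    mu, log_var : mean and log-variance of the latent Gaussian q(z|x)
    beta : weight on the KL term (value here is illustrative)
    """
    # Reconstruction error: mean squared error (an assumed choice).
    recon = sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)
    # Closed-form KL( N(mu, sigma^2) || N(0, 1) ), summed over latent dims.
    kl = -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var))
    return recon + beta * kl, recon, kl


total, recon, kl = beta_vae_loss(
    x=[50.0, 100.0, 80.0], x_hat=[52.0, 97.0, 80.0],
    mu=[1.0, 0.0], log_var=[0.0, 0.0], beta=4.0,
)
```

The reconstruction term is also what an anomaly score of this kind would be built on: a profile the model cannot reproduce well is, by construction, unlike the profiles it was trained on.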

a Examples of irregular power/state-of-charge (SoC) charging profiles detected by the anomaly detection model, with reconstruction errors within the top 1% or where the computed charging time from the reconstructed charging profile differed from the ground truth by more than 15 min. b Quantile predictions for three sessions (top, middle, bottom rows) from the charging profile prediction model using varying numbers of known points on the charging profile as input.

Once anomalies are filtered, the charging profile prediction model is trained on the refined dataset using a self-supervised learning approach. This involves predicting full profiles from partial data, with segments intentionally hidden during training, allowing the model to handle incomplete inputs. It can make accurate predictions with minimal data, adjusting in real-time as new data arrives, as shown in Fig. 4b. The model uses a quantile loss function to provide probabilistic predictions, accommodating a variety of potential charging behaviors and enhancing robustness. Fig. 4b illustrates the model’s ability to manage uncertainty in complex profiles, with quantile ranges capturing outcome variability. While initial uncertainty is high, it decreases as more data is provided. Performance improves notably in simpler cases, where quantile ranges are narrower, even with limited input data.
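The quantile loss referred to above is commonly implemented as the pinball loss, which penalises under- and over-prediction asymmetrically so that the minimiser is the requested quantile. The sketch below is a generic version; the function name and the example values are illustrative and not taken from the study.

```python
def pinball_loss(y_true, y_pred, q):
    """Mean pinball (quantile) loss for quantile level q in (0, 1)."""
    total = 0.0
    for y, p in zip(y_true, y_pred):
        diff = y - p
        # Under-prediction (diff > 0) costs q per unit,
        # over-prediction (diff < 0) costs (1 - q) per unit.
        total += max(q * diff, (q - 1.0) * diff)
    return total / len(y_true)


# For the 90% quantile, predicting 10 kW too low is penalised
# roughly nine times more than predicting 10 kW too high.
too_low = pinball_loss([100.0], [90.0], q=0.9)
too_high = pinball_loss([100.0], [110.0], q=0.9)
```

Training one output per quantile level (for example 10%, 50%, and 90%) with this loss yields the kind of prediction band shown in Fig. 4b.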

Both models incorporate components from the temporal fusion transformer (TFT), a leading time-series forecasting model developed by Lim et al. [40]. Static covariates are encoded as context vectors to influence temporal dynamics. Variable selection networks assign adaptive weights to these covariates, enhancing interpretability. RevIN layers in the anomaly detection model address distribution shifts, ensuring consistent scaling across sessions [41]. LSTM layers capture sequential dependencies, while multi-head attention layers in the prediction model focus on different input sequence parts, boosting interpretability and predictive power [31].

Achieving Reliable Predictions Post-Plug-In

Sessions were deemed abnormal if their reconstruction error fell in the top 1% or if the charging duration computed from the reconstructed profile differed from the actual value by more than 15 minutes. This temporal criterion addresses cases where power measurements drop to zero, typically indicating sensor faults or disconnection events. These cases could inflate predicted charging times and skew model performance. By excluding these anomalous sessions, training and evaluation focus only on physically plausible charging behaviors, while preserving most of the meaningful data. This minimal exclusion of 9,334 sessions (1.02% of the full dataset) was carefully chosen to remove outliers without affecting the data distribution. Post-exclusion, the prediction model was trained on the filtered dataset and evaluated on two independent test sets. The first test set comprises contemporaneous sessions from the same timeframe as the training data, while the second set includes sessions collected immediately after the training period, simulating real-world operational conditions and assessing near-future predictive performance. Distributional differences between the training and test sets are visualized in Supplementary Figs. 3 and 4 using latent representations from the β-VAE model.
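The exclusion rule can be sketched as follows, using the two criteria given in the Fig. 4a caption: a reconstruction error within the top 1%, or a reconstructed charging time that differs from the ground truth by more than 15 minutes. The function and argument names are hypothetical, and the way the top 1% cut-off is computed here (a simple rank threshold) is an assumption.

```python
import math


def flag_anomalies(recon_errors, recon_minutes, actual_minutes,
                   top_fraction=0.01, max_time_gap_min=15.0):
    """Return one boolean per session: True if the session is flagged."""
    n = len(recon_errors)
    # Number of sessions that make up the top fraction (at least one).
    k = max(1, math.ceil(top_fraction * n))
    # Smallest error that still belongs to the top fraction.
    threshold = sorted(recon_errors, reverse=True)[k - 1]
    flags = []
    for err, t_hat, t in zip(recon_errors, recon_minutes, actual_minutes):
        high_error = err >= threshold
        time_mismatch = abs(t_hat - t) > max_time_gap_min
        flags.append(high_error or time_mismatch)
    return flags


# 100 synthetic sessions: errors 0..99, times agree except for session 5.
errors = [float(i) for i in range(100)]
actual = [30.0] * 100
reconstructed = [30.0] * 100
reconstructed[5] = 50.0
flags = flag_anomalies(errors, reconstructed, actual)
```

With tied error values, a rank threshold like this one can flag slightly more than the nominal fraction.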

Fig. 5 details the performance of the prediction model. Results correspond to an ensemble model averaging predictions from three independently trained instances of the TFT model, each initialized with different random seeds and data splits. While the best individual model achieved slightly higher accuracy on some metrics, the ensemble was analyzed for greater robustness and representative behavior under varied conditions. Training and validation loss curves for the individual TFT model runs are in Supplementary Fig. 2.

Charging times were calculated based on either the completion of the charging session or reaching 80% SoC, whichever came first. Analysis was confined to sessions with a strictly greater than 15% change in SoC. a The charging profile prediction model’s performance on predicting the power-SoC charging profile and charging time for different numbers of known points of the charging profile as input, evaluated on the two test sets. Normalised mean absolute error (MAE) was computed as the MAE of predicted power normalised by connector power rating. Box plots show the median (centre line), mean (triangles), interquartile range (box limits), and whiskers (1.5 × the interquartile range). b Scatter plot with density overlays comparing median predicted charging times against ground truth across different numbers of input points. The results combine data from both test sets. c The accuracy ranges of the test set sessions, evaluated on both test sets. d The percentage of uncertain sessions for different numbers of input points across both test sets. e The median time required to accumulate a specific number of points on the charging profile, with error bars representing the interquartile range. f Distribution analysis of absolute errors in predicting charging time, across varying numbers of input points on the charging profile, incorporating results from both test sets.
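Two definitions from this caption lend themselves to a short sketch: the charging time (session end or 80% SoC, whichever comes first) and the normalised MAE (MAE of predicted power divided by the connector power rating). The function names and the sample values below are illustrative assumptions, not the study's code.

```python
def charging_time_minutes(times_min, soc_pct, target_soc_pct=80.0):
    """Minutes from session start until SoC first reaches the target,
    or until the last logged point if the target is never reached."""
    for t, soc in zip(times_min, soc_pct):
        if soc >= target_soc_pct:
            return t - times_min[0]
    return times_min[-1] - times_min[0]


def normalised_mae(pred_kw, true_kw, connector_rating_kw):
    """MAE of predicted power, divided by the connector power rating."""
    mae = sum(abs(p - t) for p, t in zip(pred_kw, true_kw)) / len(true_kw)
    return mae / connector_rating_kw


# Session that passes 80% SoC at minute 2 of the log.
t_reach = charging_time_minutes([0, 1, 2, 3], [70, 76, 81, 85])
# Session that stops at 40% SoC: charging time is the full duration.
t_short = charging_time_minutes([0, 5, 10], [20, 30, 40])
# Mean error of 5 kW on a 150 kW connector.
nmae = normalised_mae([100.0, 90.0], [110.0, 90.0], 150.0)
```

Normalising by the connector rating makes errors comparable between, say, a 50 kW and a 360 kW connector.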

The model’s ability to predict charging profiles and times is assessed for both test sets in Fig. 5a, comparing median predictions to actual values. Although performance was marginally better on the first test set, the difference was minor. The model achieved about 90% relative accuracy on both sets when predicting charging time from a single input point; the corresponding mean absolute error, less than 2.5 minutes, is reported separately in Supplementary Fig. 5. The single-point case is the lower bound of the model’s performance. Performance was also evaluated with absolute-error metrics, such as MAE in charging time, and with charging curve accuracy for input lengths from 1 to 15 points. Accuracy improves as more data becomes available, enabling operational actions such as anticipating charger availability, assessing session completion times, and giving drivers early completion time estimates. Even with minimal input, the model explained 91% of the variance in charging time, as seen in Fig. 5b. With more known points, the model adapts quickly, improving accuracy and narrowing uncertainty. A prediction is classed as uncertain if the charging time difference between the 10% and 90% quantile predictions exceeds 20 minutes or 50% of the median time. Fig. 5c,d shows that the share of uncertain sessions decreases from 15% with one point to less than 2% with five or more points. With six known points, corresponding to about five minutes of charging, 90% of sessions achieve more than 90% accuracy in time prediction. With 15 points, only 1.45% of sessions fall below 90% accuracy, and just 0.79% below 80%.
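The uncertainty rule in this paragraph (a 10% to 90% quantile spread above 20 minutes, or above 50% of the median time) is simple enough to state as code. The sketch also includes one plausible definition of relative accuracy, namely one minus the relative absolute error; that definition is an assumption made here, since the text does not spell it out.

```python
def is_uncertain(t_q10_min, t_q50_min, t_q90_min,
                 abs_limit_min=20.0, rel_limit=0.5):
    """True if the predicted charging-time band is too wide."""
    spread = t_q90_min - t_q10_min
    return spread > abs_limit_min or spread > rel_limit * t_q50_min


def relative_accuracy(t_pred_min, t_true_min):
    """Assumed definition: 1 - |prediction - truth| / truth."""
    return 1.0 - abs(t_pred_min - t_true_min) / t_true_min


wide_band = is_uncertain(20.0, 30.0, 45.0)     # spread 25 min > 20 min
narrow_band = is_uncertain(8.0, 10.0, 12.0)    # spread 4 min, under both limits
short_session = is_uncertain(5.0, 10.0, 11.0)  # spread 6 min > 50% of 10 min
accuracy = relative_accuracy(27.0, 30.0)       # 3 min off on a 30 min session
```

The relative limit matters mainly for short sessions, where a spread of a few minutes is already large compared with the expected charging time.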

Charging profile data is typically logged at one-minute intervals, but the model updates its predictions when the reported SoC increases by at least 1%, as defined by the Open Charge Point Protocol (OCPP). Many operators use a 60-second logging interval to balance data volume and utility, but this can be adjusted. For instance, busier sites might report more frequently for more accurate predictions. Because DC fast charging is non-linear, SoC update timing varies across sessions. Fig. 5e shows the median time to accumulate a given number of SoC points. Early in charging, multiple updates occur within minutes, while later stages require longer due to reduced power. This results in a variable temporal resolution governed by charging dynamics. Model updates may occur every minute early in a session, allowing timely and accurate predictions as more data becomes available. As charging slows and updates become less frequent, predictions typically converge, reducing the need for further refinement. Fig. 5f depicts absolute error distributions, while relative errors are in Supplementary Fig. 5c. Initially, the model slightly underestimates charging time, but its accuracy improves as more data is received. Error distributions narrow, with most sessions achieving prediction errors within ±5% and absolute errors under one minute. Sessions with persistently high uncertainty might indicate abnormal charging behavior that was not filtered by the anomaly detection model, so persistent uncertainty can serve as a real-time anomaly signal during deployment.
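The SoC-triggered update scheme can be illustrated by reducing a minute-level log to the points at which the reported SoC has risen by at least 1% since the last retained point. This is a sketch under assumptions: the function name and the sample log are invented for illustration, and a real deployment would read these values from charger telemetry.

```python
def soc_update_points(times_min, soc_pct, min_step_pct=1.0):
    """Keep the first sample, then every sample whose SoC is at least
    min_step_pct above the last kept sample."""
    points = [(times_min[0], soc_pct[0])]
    for t, soc in zip(times_min[1:], soc_pct[1:]):
        if soc - points[-1][1] >= min_step_pct:
            points.append((t, soc))
    return points


# Early in a session SoC rises quickly, so most minutes yield an update;
# near the end several minutes may pass between updates.
log_times = [0, 1, 2, 3, 4, 5, 6, 7]
log_soc = [50, 52, 54, 55, 55, 55, 56, 56]
updates = soc_update_points(log_times, log_soc)
```

Each retained point would trigger a fresh prediction, which is why the time resolution of the model's updates follows the charging dynamics rather than the wall clock.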

Extracting Insights from EV Charging Data

Understanding the mechanics of machine learning models can offer valuable insights into real-world EV charging behaviors. This is achieved by visualizing the anomaly detection model’s learned low-dimensional latent embeddings and analyzing the prediction model’s static covariate influences. Real-world charging data was first screened against reference profiles generated by Shell under controlled lab conditions. These reference curves cover a range of EV brands, models, connector power ratings, connector types, and ambient temperatures. Using Shell’s curve-matching software, real-world profiles were matched to reference curves. Around 15,000 profiles with strong matches were compressed into latent representations by the β-VAE encoder, projected onto a 2D plane using t-SNE, and visualized in Fig. 6a. Distinct clusters form in the latent space, with EVs of the same brand and model generally grouping closely. The β-VAE encoder effectively preserves relationships defined by the curve-matching process, capturing meaningful patterns. The Nissan Ariya/Leaf grouping is less distinct due to overlapping reference profiles with other brands, as shown in Fig. 6b. RevIN layers in the β-VAE model group profiles with the same shape but different scales, as demonstrated by the unified clustering of Volkswagen ID.3 Pro and Pro S sessions. This scale-invariance arises because RevIN normalizes each session individually, allowing the latent space to prioritize shape over magnitude, which is essential when dealing with varied infrastructure and battery capacities.
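The scale-invariance attributed to RevIN can be seen in a stripped-down sketch. Reversible instance normalisation standardises each series with its own mean and standard deviation and keeps those statistics so the operation can be undone. The version below omits RevIN's learnable affine parameters, which is a simplification made for this illustration; the profile values are invented.

```python
import math
import statistics


def revin_normalise(series, eps=1e-5):
    """Standardise one session with its own statistics; return them too."""
    mean = statistics.fmean(series)
    std = math.sqrt(statistics.pvariance(series) + eps)
    return [(v - mean) / std for v in series], (mean, std)


def revin_denormalise(normalised, stats):
    """Undo revin_normalise using the stored per-session statistics."""
    mean, std = stats
    return [v * std + mean for v in normalised]


# Two profiles with the same shape at different power levels.
profile_a = [50.0, 100.0, 80.0, 40.0]
profile_b = [2.0 * v for v in profile_a]
norm_a, stats_a = revin_normalise(profile_a)
norm_b, stats_b = revin_normalise(profile_b)
restored_a = revin_denormalise(norm_a, stats_a)
```

After normalisation `norm_a` and `norm_b` are nearly identical, so an encoder fed these inputs sees shape rather than magnitude, while the stored statistics allow the original scale to be restored at the output.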

Box plots show the median (centre line), interquartile range (box limits), and whiskers (1.5 × the interquartile range). Results integrate data from both test sets. a t-distributed stochastic neighbour embedding (t-SNE) visualisation of the latent representations of charging data. Each point represents a full power/state-of-charge (SoC) charging profile, coloured according to its closest match with a reference profile obtained through charging under ideal laboratory conditions. b Zoomed-in views of the clusters formed in the t-SNE plot. The black curves, rendered with partial transparency, denote multiple charging profiles, and their overlap yields a grey appearance through cumulative opacity. c Performance of the temporal fusion transformer (TFT)-based charging profile prediction model compared against various baselines when evaluated on a combined dataset of test sets one and two. For TFT, three runs are shown directly as the lower error bar, bar height, and upper error bar; other models show a single run. d Performance of the charging profile prediction model trained with different configurations of static covariates, evaluated with normalised mean absolute error (MAE) in prediction. e Relative importance of the static covariates derived from the charging profile prediction model’s variable selection networks. The violin plots represent scaled kernel density estimates of relative feature importance, with overlaid box plots indicating medians and interquartile ranges for each static covariate. f Performance of the charging profile prediction model trained with three configurations of battery capacity: omitted, used as a continuous covariate, and used as a binned categorical covariate. For geographical generalisation, the capacity-omitted model is also evaluated on the Netherlands dataset, which lacks this feature.

Fig. 6c presents a baseline comparison and ablation study of model architecture. The TFT achieves the lowest normalized MAE, outperforming RNN, GRU, LSTM, and the vanilla Transformer on the combined test sets. Two reduced variants are evaluated: removing multi-head attention and feed-forward linear modules yields the VSN-LSTM, while removing recurrence results in a pure Transformer. TFT’s superior performance over these variants indicates that attention and recurrence offer complementary advantages for learning charging profile representations. Given the self-supervised task and variable input sequence lengths, comparison with traditional statistical learning methods is inapplicable, as they typically assume fixed-length feature vectors and supervised targets. TFT was evaluated across three independent runs, despite the computational cost of 72 NVIDIA A100 GPU hours per run. This robustness check was selectively applied to validate stability and generalizability, while baselines were evaluated once for efficiency.

The role of static covariates is examined through feature ablation studies, with results in Fig. 6d. Experiments assess model performance when trained with all five covariates versus versions with specific covariates removed. Removing estimated battery capacity or ambient temperature slightly degrades predictive accuracy, particularly with limited time series input. This effect is more pronounced for capacity, which is crucial early in a session. As more temporal data becomes available, performance differences diminish, suggesting static covariates are particularly valuable early on, compensating for insufficient time-dependent structure. Supplementary Fig. 6 provides further methodological details. To understand the model’s prioritization of inputs, the relative importance of the five covariates was examined using VSNs within the TFT architecture. These networks dynamically assess and weight features at inference time, suppressing irrelevant inputs while amplifying predictive ones. Fig. 6e shows the distribution of relative importance scores. All five covariates contribute meaningfully, with estimated capacity emerging as the most influential, followed by connector power rating. The importance of starting SoC varies across sessions, with higher weights for extreme values. Ambient temperature and connector type play less prominent roles, though their influence remains non-trivial. These scores reflect the model’s internal priorities rather than intrinsic physical relevance. Lower importance scores may indicate predictable effects that are captured indirectly through correlations with time series features. Importance distributions are specific to the dataset and may vary in other scenarios.
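The weighting step of a variable selection network can be sketched as a softmax over per-covariate scores, followed by a weighted sum of the covariate embeddings. In the TFT the scores and embeddings are produced by gated residual networks; here they are supplied directly as made-up numbers, so the sketch shows only how softmax weights act as relative importances, not how the study's model computes them.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def select_variables(embeddings, scores):
    """Weight each covariate embedding by its softmax score.

    embeddings : dict name -> list of floats (all the same length)
    scores     : dict name -> raw selection score
    Returns (weights by name, combined embedding).
    """
    names = list(embeddings)
    weights = softmax([scores[n] for n in names])
    dim = len(embeddings[names[0]])
    combined = [
        sum(w * embeddings[n][i] for w, n in zip(weights, names))
        for i in range(dim)
    ]
    return dict(zip(names, weights)), combined


# Hypothetical embeddings and scores, for illustration only.
demo_embeddings = {"capacity": [1.0, 0.0], "temperature": [0.0, 1.0]}
demo_scores = {"capacity": 2.0, "temperature": 0.0}
demo_weights, demo_context = select_variables(demo_embeddings, demo_scores)
```

Because the weights are recomputed for each input, the same covariate can receive a different importance in different sessions, which is consistent with the per-session distributions plotted in Fig. 6e.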

To assess geographic generalization, the model was evaluated on a test set of sessions from the Netherlands, which were not included in training. This dataset lacked battery capacity information, so the model trained without estimated capacity was used. As shown in Fig. 6f, the model achieved comparable performance on the Netherlands data and on the primary test sets from Great Britain and Germany, indicating robustness to geographic distribution shifts within northwestern Europe, even with missing covariates. Fig. 6f also presents an ablation study on reducing the granularity of estimated capacity. Using binned categories yielded intermediate performance, outperforming the model without capacity but underperforming the continuous variant. These differences suggest estimated capacity conveys more than nominal pack size, potentially encoding battery-specific characteristics like cell chemistry or charging rate limitations. As the only systematically varying static input, it likely acts as a proxy for EV identity, enabling the model to infer latent structural or electrochemical differences. While these associations can’t be directly verified, the performance degradation upon discretization supports the view that preserving a continuous representation allows the model to exploit fine-grained distinctions relevant to charging behavior.

Original Story at www.nature.com